Note: the version of this post on Medium has larger images and other benefits – I recommend you read it there.

On Thursday morning last week, Apple sent me a review unit of the new MacBook Pro with Touch Bar for testing. I’ve been using it almost non-stop since, to try to put it through its paces and evaluate this latest power laptop from Apple. I’ve only had four days with it, and so this is probably best seen as a set of early impressions rather than a thoroughgoing review, but here are my thoughts on using it so far. I’ll cover quite a few bases here, but my main focus will be on addressing the two issues which I suspect people will have the most questions about: the Touch Bar and the power of this computer to do heavy-duty work.

The model I’m using

First off, here’s the model I’m using:

In short, this is the 15-inch version, with 16GB of RAM, but it’s not the highest-end model. There is a version with a 2.9GHz processor and a Radeon Pro 460 graphics card, which would be a good bit more powerful for some tasks than the machine I’m using, though the RAM on that computer is the same.

I’m coming to this experience from using two main Macs over the past couple of years. When I’m at my desk, I’m typically using a 2010-version Mac Pro with 32GB of RAM, 12 processor cores running at 2.66GHz, a massive SSD, and a Radeon GPU. When I’m mobile, I’m using a MacBook Air from a couple of years ago, with 4GB of memory and an Intel graphics card. In most respects, at least on paper, this MBP is a big step up on the MBA, but is less powerful than the Mac Pro, with the exception of the graphics card.

The Touch Bar

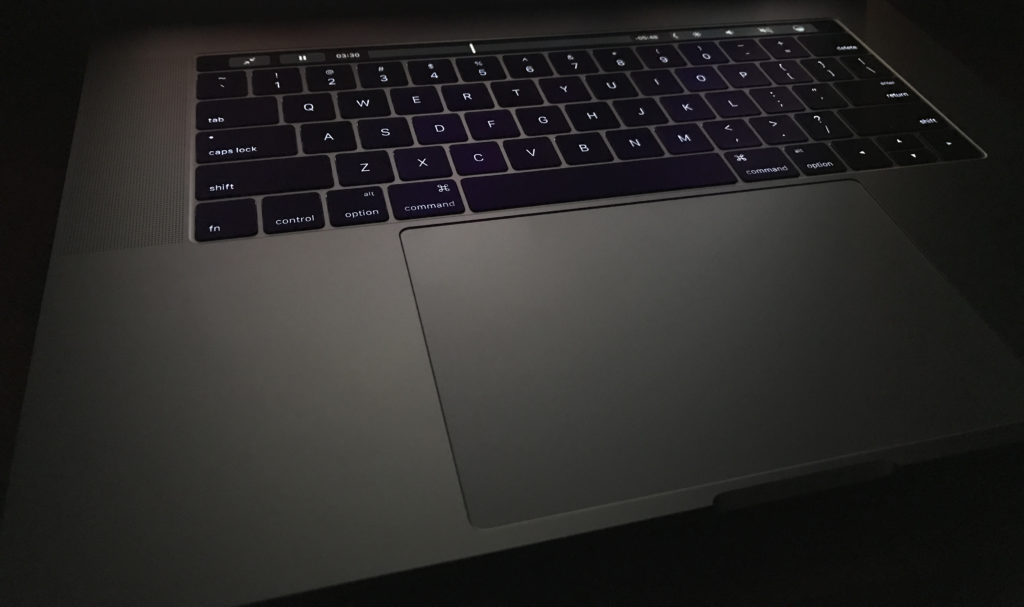

So let’s start with the Touch Bar. I had a chance to play around with the Touch Bar a bit at the launch event, and found it intriguing. It was already clear then that this was the kind of feature that could save time and make workflows easier if done right, but that would also come with a learning curve, and my first few days using it more intensively have confirmed both of those perceptions.

An analogy

The best analogy I can think of is learning to touch type. My oldest daughter has recently gone through this process, and I remember going through it when I was about the same age. Before you start learning, you’ve probably got pretty good at the hunt-and-peck method, and may even be quite fast. When you start learning to touch type, a lot of it is about forcing yourself to change your habits, which can be painful. At first, you’re probably slower than before, and the temptation is to go back to doing what you’ve always done, because it feels like you’re going backwards. But over time, as you master the skill, you get faster and faster, and it feels even more natural. You’re also able to stay in the flow much better, watching the screen rather than the keys.

Learning to use the Touch Bar is a lot like that. If you already use a Mac regularly, you likely have pretty well-established workflows, combining mouse or trackpad actions, typing, and keyboard shortcuts. Suddenly, the Touch Bar comes along and gives you new ways of doing some of the things you’ve always done a certain way. A few may replace keyboard shortcuts, but the vast majority will instead be replacements for mouse or trackpad actions. The first step is remembering that these options are now available. The Touch Bar is easily bright enough to see in any lighting conditions, but it’s not intended to be distracting, so although you may be vaguely aware of it in your peripheral vision as you’re looking at the screen, it doesn’t draw your eye. You have to consciously remember to use it, a bit like how you have to consciously remember to use all your fingers when you’re learning to touch type.

At first, your instinct is to just keep doing things the way you’ve always done them. But then you start to realize that the repetitive task you’re doing by moving the mouse cursor away from the object you’re working with to the toolbar or to the Format pane at the side of the window could be accomplished much more easily by just pressing a button in the Touch Bar. You try it and it works great. The next time you do it a little more quickly, and pretty soon it’s a habit. The first couple of times it may take more time than your old method, because you’re having to break the old habit, but you quickly develop a new, more efficient habit. Your mouse cursor stays by the object you’re working with (or out of the way entirely) and you go on with your work. I’ve been integrating the Touch Bar into some of my workflows over the last few days, and it’s now starting to become natural and I’m getting to the stage where things are faster than they were before.

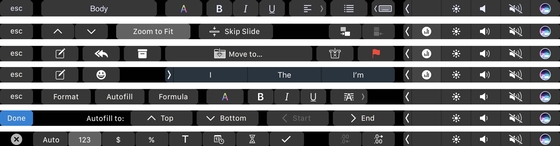

Below are some samples that show the adaptability of the Touch Bar:

This adaptability is one of the strengths of the Touch Bar — the way it morphs not just between apps but based on the context within each app too. The video below shows several examples in quick succession as I move between apps and between contexts within apps. You’ll see how rapidly it changes as I go through these (there’s no sound on the video):

Most of the buttons are either self-explanatory or familiar enough to be intuitive, but I did find a couple of cases where I simply had no idea what a button meant. Since you can’t hover over these buttons in the way you can an on-screen button, there’s really no way to find out either, which can be tricky.

Ultimately, as I’ve written previously, the Touch Bar represents a different philosophical approach to touch on laptops by Apple compared with Microsoft’s all-touch approach to computers. I’ve used a few Windows laptops with touch, and though there have been times when it was useful, it’s often frustrating – the screen tends to bounce away from you when you jab it with your finger and touch targets are often too small. Apple’s approach keeps the horizontal and vertical planes separate – the vertical plane on a MacBook is purely a display, while the horizontal plane is the one you interact with. This is easier on your hands and arms, and allows you to work more quickly because everything is within easy reach. The trackpads on Apple’s laptops have brought some of the benefits of touch to laptops over the last few years, and the Touch Bar takes this a step further.

Third-party support

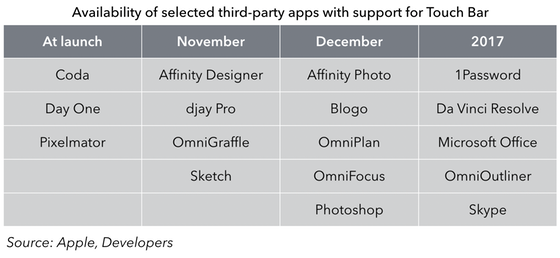

For now, the Touch Bar is only available in first-party applications on the Mac, and most of Apple’s own apps now support it. However, if you’re a typical Mac user it’s quite likely that you spend a fair amount of time in third-party apps, and that’s certainly the case with me. I spend a lot of my time on the Mac in Tweetbot and Evernote, for example, neither of which supports the Touch Bar yet, except for auto-correction when typing, which is universal.

Apple demoed some third-party apps with Touch Bar integration at its launch event, and below is a table of those apps whose developers have committed to supporting it so far:

For now, users will be able to take advantage of Touch Bar inside the Apple apps and a handful of others, and that will mean adapting some workflows but not others. The experience here is going to be like the early days of 3D Touch support on the iPhone – it will be nice to have for the apps where it’s available, but there will be a lot of apps where it doesn’t work yet. In some cases, that’s going to push users towards apps that do support the feature, as was the case with 3D Touch. And since support is relatively easy to build, I would guess many developers will get on board quickly once the laptops are out.

Touch ID

Since the Touch ID sensor is part of the Touch Bar strip, it’s worth mentioning that briefly too. For anyone who’s used Touch ID on an iPhone or iPad, the value proposition will be fairly obvious – this is a great way to unlock your device without using a password. To be sure, people probably unlock their laptops far fewer times per day than they do their phones, but it’s still a handy time-saver. I’ve had Apple Watch unlock set up on my MacBook Air for a few weeks, and found that useful, but didn’t feel the need to set it up on this MacBook Pro because Touch ID is actually faster.

But Touch ID goes beyond just unlocking — it can also be used for various other functions where you’d normally enter your system password, including certain app installations and system changes. When it’s available, an indicator shows up in the Touch Bar strip pointing to the sensor, which is handy, because it can’t always be used in place of a password.

Siri

The Siri button that’s part of the Touch Bar is also worth discussing. I’ve been using Sierra on my existing Macs for a couple of months now, but haven’t made much use of Siri, in part because I can never remember which hot key I’ve set to invoke it, and clicking on the on-screen Siri button in the menu bar is too much trouble. Having a dedicated Siri button is definitely making me use Siri more.

Power and performance

On, then, to power and performance. I gave you the specs for the machine I’m testing earlier – it’s not the top of the line model, but given some of the commentary from the professional community and those claiming to speak on their behalf over the last couple of weeks, I wanted to put this side of the MacBook Pro to the test.

Testing

I’m not a regular user of heavy-duty creative apps, but I have used Final Cut Pro fairly extensively in the past, and have an Adobe Creative Cloud subscription which gives me access to other apps like Photoshop, Lightroom, Premiere, and Illustrator, some of which I use occasionally. As a first test, I imported some 4K video shot on my iPhone into the new version of Final Cut Pro and edited it. I checked all the boxes for analysis in the importing process, but it still completed quickly and without slowing down the computer. Both Final Cut and the other apps I had open continued to perform smoothly during the analysis and background tasks. The editing was smooth, and I got to use the new Touch Bar buttons at several points, adding in titles, transitions, and other elements, and then exported the file. Everything was quick and smooth, and the experience was very comparable to what I’m used to on my Mac Pro, which is where I’ve mostly used FCP in the past.

Next, I decided to push things a little harder and shot a longer 4K video while riding my bike. The bike was bumping around all over the place while recording, and as a result there was lots of movement and also rolling shutter issues in the video. I imported this video into Adobe Premiere, and then used the Warp Stabilizer effect to try to smooth out some of those issues. This task took quite a bit longer, but again the computer continued to function just fine while the task was underway, even when I simultaneously opened up Lightroom and imported several hundred RAW images from my DSLR. The fans did spin up during the Premiere background tasks, but I’ve noticed they’re quite a bit quieter on this new MacBook than on past MacBooks I’ve used, which I’d guess is due to the new fan design.

There is no doubt in my mind that this MacBook Pro is perfectly capable of handling heavy-duty professional creative work. That’s not to say that a computer with more cores, more RAM, or an upgraded graphics card couldn’t do some of these tasks faster, but many creative professionals will have a stationary machine like a Mac Pro, an iMac, or something else back at their desk and will use the MBP when they’re on the go.

Input from creative professionals

As I mentioned, I’m not a creative professional, but I happen to have married into a family of them, so I checked in with three of my brothers-in-law who work as video professionals (two as editors and one as a producer). I asked them several questions about the hardware and software they use, their workflows, and attitudes towards these things in their places of work. Both of the editors are currently using 5K iMacs with 32GB of RAM, and mostly use Adobe Premiere or Avid for editing (Final Cut Pro has fallen out of favor with the pro video editing crowd since the FCP X release, though at least one of them said that he expected the latest update to win some former users back to the Apple side). This MacBook Pro, which maxes out at 16GB, wouldn’t match the performance of one of those 5K iMacs, but could well be the kind of machine they’d take with them if they were editing or reviewing footage on set. And with the ability to drive two 5K monitors, they could even finish the job when back at the office on the same computer. It wouldn’t perhaps be as fast at some of the background tasks as an iMac or Mac Pro, but it would allow them to do the job just fine, and I think that’s the proper way to see this computer.

Portability

That brings me to the next thing that’s worth talking about, which is portability. The new 13″ MacBook Pro is being positioned as a successor of sorts to the 13″ MacBook Air — it has a similar footprint and weighs about the same, yet is far more powerful. This 15″ MacBook Pro, of course, is larger (and potentially even more powerful), and so obviously not to be seen as a direct replacement for the Air. But as that’s the transition that I’m making personally, it makes sense to make that comparison at least briefly. The MBP is clearly heavier and larger than the MBA, though not by as much as you might think. It weighs a pound more — 4 pounds versus 3 — but the footprint is very similar, and it’s actually thinner than the MBA at its thickest point. And of course it has four times the pixels on the screen. The images below should give you some sense of the size comparison:

The true comparison, of course, is to the earlier 15″ MacBook Pro, which is roughly half a pound heavier and slightly thicker. I actually have an older 15″ MacBook Pro around as well, from about five or six years ago, and this thing is night and day from a size and weight perspective. Long story short, this is a very portable laptop, less so certainly than the 13″ one, but more so than any other 15″ Apple has ever made, and likely more so than most other 15″ laptops on the market today. And yet it has the power I talked about earlier.

Keyboard, Screen, and Audio

Three other hardware features are worth discussing at least briefly here.

Firstly, the keyboard. This keyboard takes the same approach as the keyboard on the 12″ MacBook, but is a new version with a different dome switch that allows for more of a springy feel. I haven’t used the MacBook keyboard extensively, but this keyboard has been totally fine for me, and I adjusted to it almost immediately. I have noticed that typing on it is a little noisy, I think because I’m pressing as hard as I have in the past on laptops with more key travel, and so I’m slowly lightening my touch, which is resulting in a quieter experience.

The screen on this thing is beautiful. Apple now has P3 color on its newest iPhones, iPads, and MacBook Pros, and it’s a really nice improvement. I took some pictures of the Pro next to the Air to try to capture this, but it’s hard to get right in a photograph. However, looking at them side by side, there is both deeper color and a noticeably brighter screen on the Pro. And of course it’s a Retina display, so the screen looks much sharper too. The combination of the Retina resolution and the brightness and color gamut makes it really nice for watching videos. I spent some time over the weekend watching a variety of video on it, and it was one of the nicest displays I’ve ever used for this.

Lastly, the sound. The new MacBook Pro has different speakers, and they’re quite a bit louder than on the MacBook Air. In my office, I have a stereo hooked up to an AirPort Express for AirPlay and play all my music that way, but the new Pro will do fine even on its own for sound volume and quality. I tested with a random iTunes track, as you can hear in the audio clip below. I recorded using an iPhone placed between the two laptops.

The sound is noticeably louder and fuller on the MacBook Pro, as I hope you can hear in that sample. Again, this makes it perfect for watching movies in your spare time, as well as for listening to music.

Ports and adapters

Another thing I’ve seen some concern about with this new MacBook is the ports, all four of which are Thunderbolt 3 / USB-C. That’s a new port for me – I’ve never owned a computer with a USB-C port, though two of the smartphones I’ve tested recently (the Google Pixel and LeEco Pro3) have USB-C charging. As a result, I was interested to see how I’d get by with my existing peripherals.

I made a trip to the Apple Store and picked up a few adapters:

- Two USB-A to USB-C adapters for my USB peripherals

- A Thunderbolt 2 to Thunderbolt 3 adapter for my Thunderbolt display

- A USB-C Digital AV Multiport Adapter for another display that uses HDMI

Of course, all these adapters are discounted until the end of the year, which is nice because the cost adds up fast on some of these. All of them worked fine, and I’ve appreciated being able to plug in any of these various peripherals on either side. It’s particularly nice to be able to shift power from side to side based on where the nearest outlet is.

This is a classic Apple situation – removing ports before the world has necessarily moved on, in part as an attempt to move people along. But in this case Apple is particularly far ahead of the market, and so these adapters are a concession to that reality. Some people will already have USB-C or Thunderbolt 3 peripherals such as hard drives, and these will become increasingly common over the next few years. Along with the adapters, Apple sells a variety of LaCie, G-Tech, and Sandisk storage devices and the LG displays, which support USB-C natively.

But for now, we’re going to be using adapters when we use a number of existing peripherals. I already have a pocket full of adapters in my work bag for my MacBook Air, for presenting, using Ethernet cables, and so on, so I’m used to this situation. And as I pointed out on Twitter recently, even if you buy all the adapters Apple recommends as you go through the buying process for a new MacBook Pro, the cost is a tiny fraction of the total (and of course less than full price between now and December 31). I will say that it feels a bit odd with a brand new iPhone and a brand new computer not to be able to plug one into the other out of the box, though I suspect many users no longer plug their iPhones into their computers at all.

Design

This is the first MacBook Pro to be available in Space Gray, and it’s a nice new option (this is the one Apple sent me, and in person it looks darker than in most of the pictures in this post). It’s sleek looking, and smudges and scratches will show up a lot less on this surface than on the bright silver surface of earlier MacBooks. It’s a good looking computer overall too, regardless of the finish. The display takes up much of the vertical plane, with fairly small bezels (one of the ways Apple was able to shrink the footprint), while the horizontal plane looks really good with the addition of the Touch Bar and a larger trackpad.

I’ve found that trackpad to be totally fine, by the way. Even though it’s consistently under the heels of my hands, I’ve never once accidentally moved the cursor or clicked on anything while typing because of it. I will say that I use the bottom right corner for right-clicking and that’s now a long way from the center of the trackpad, which has resulted in some failed right-clicks when I haven’t moved far enough with my fingers. If you tend to use Control-click instead of bottom-right click, then this obviously won’t be an issue. I have also noticed that if the laptop is resting on my lap rather than on a table, there’s something about the angle of my hand on the trackpad that sometimes triggers an accidental right-click when I’m trying to click in the center of the trackpad, because another part of my hand is resting on the bottom right corner. This happens because the trackpad now sits very close to the edge of the computer on the side closest to you, so that the heel of your hand can easily stray onto the trackpad when resting on the edge.

Miscellaneous glitches

I did have a few glitches here and there. Firstly, for the first day and a half I was using the MacBook Pro, it would lose WiFi connectivity when it went to sleep, and fail to reconnect. After a restart, this issue seemed to resolve. Secondly, when I left Adobe Premiere processing video and stepped away for a few minutes, the computer went to sleep, and when I woke it, the whole computer did a hard crash, restarting out of the blue. Lastly, I had an occasion when the computer hung to the extent that I had to restart it.

I’m not used to having these issues regularly on Macs, though I’ve experienced each of them on occasion in the past. It was odd to have these happen in quick succession, and I’m not sure what to ascribe that to – Apple says it hasn’t seen these issues itself in testing. I will say that none of these issues has happened twice, but I’ll be watching for more of this stuff to see if these were just flukes.

Conclusions

This is a really solid new laptop from Apple. I wrote after the launch event that Apple now has the most logical lineup of laptops it’s had in a long time, with a clear progression in terms of power, portability, and price. Even within the new MacBook Pro range, there are size, power, and feature options. But all of these are intended to be pro computers.

That’s not to say they’re all intended to be the only computer someone who uses heavy-duty creative apps needs – the Mac Pro and iMac are there at least in part to meet those needs. But these are computers that the vast majority of people who use a Mac for work would be fine to use as their only machine – that’s certainly the case for me. This 15″ version I’ve been testing is slightly less portable than the 13″ version, but can be significantly more powerful, and could handle pretty much any video or photo editing task you’d want to throw at it. Yes, there are desktops including Apple’s that could perform some of those tasks more quickly, but this laptop is intended for someone who needs portability too, and that’s the point here. Every computing device involves compromises – here, portability has been prioritized over raw power, but not in such a way that makes this computer useless for powerful tasks.

All that would be true even if the Touch Bar didn’t exist, and yet it does. It’s a really nice addition to what’s already a great computer, and once you get some way along the learning curve it really speeds up tasks and makes life easier on your hands. As third-party developers embrace it, it’ll be even more universally useful, and I wouldn’t be surprised if we see some developers using the Touch Bar in really innovative ways within their apps. Can you live without it? Absolutely – all of us have until now. But it’s a great addition if you’re in the market for a new laptop.